I remember I used to watch this guy's videos, and the icon for the image viewer in serenity was pepe the frog. And he also admitted to browsing 4chan. And he changed his twitter link to x.com before even twitter changed it. Also it was kinda weird that he had some private discord channels whose contents he was very secretive about. Now that he's making a nonprofit with github's former CEO, there are absolutely zero barriers to the exact same bullshit from all the companies he complains about.

ah fuck I didn't know Ladybird had those people involved (was only aware of project existence and reasons), fuck :<

well this fucken sucks. I was rooting for the dude and his projects.

Couldn't find the way to turn this into a pithy blog post so just dumping it here:

does anyone else feel that the rationalists want a future of a billion trillion virtual humans, each and every one with an immutable gender bit set?

it is a little entertaining to hear them do extended pontifications on what society would look like if we had pocket-size AGI, life-extension or immortality tech, total-immersion VR, actually-good brain-computer interfaces, mind uploading, etc. etc. and then turn around and pitch a fit when someone says "okay so imagine if there were a type of person that wasn't a guy or a girl"

Shouldn't they be fans of The Culture? And didn't The Culture have people changing gender for any reason (including curiosity), and it was accepted?

(It's been years since I read those books, so I could be confusing it with something else.)

the Culture humans accepted being subsumed into a Mind after 400 years, so Yudkowsky disapproves. He also dislikes The Minds.

What is it with Rats extolling The Player of Games above other Culture novels? It's the one HN likes best too. It's probably the only one I've not re-read. Maybe it's how the main character is kinda seduced by the parody of patriarchal capitalism in the culture he's coerced to infiltrate.

Personally I think Use of Weapons is the best one.

Personally haven’t seen a headline about Ol’ Billy Boy ever since word got around that he was a diamond medallion member of the lolita express airlines. William Gatorade thinks AI’s got what climate craves, i.e. waste heat.

> Gates also mentioned that AI will be a good force in providing better health care and tackling climate change, in particular by calling nuclear fusion energy a clean alternative to fossil fuels.

Ah yes, fusion. With the wealth of data we have from - checks notes - stars and bombs, the applied statistics machines will surely be able to extrapolate working fusion reactors.

Don't know what we need Gates for. Surely an AI should be able to spout this bullshit?

i think that openai also wanted to solve their problems with fusion, but they got a step further, they made a startup for this. not normal nuclear power plant hot rock machine, no, they want tech that is perpetually Just A Decade Away. it makes some perverse sense if your funding is dependent on misguided hype only

fusion research is just the thinnest disguise for thermonuclear weapons research, especially the inertial confinement fusion variety

> Don’t know what we need Gates for. Surely an AI should be able to spout this bullshit?

Ugh, so many people are working the "AI will solve X problem" mill. I don't need nor want AI to be there increasing output.

Eh, there’s a chance that machine learning might help here… there’s some interesting stuff come out of that area of research, like radio antennae and rocket engines and so on, but I’d bet anything that a) no LLMs were involved and none ever will be, and b) “ai” only appears in marketing copy and funding pitches.

dunno about rockets, but the antenna thingy works only because you can simulate the performance of an antenna very reliably, precisely and quickly. This data was fed back, random small changes were made, and things that were an improvement passed to the next iteration. Not sure what this approach is called but none of it is LLM
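For anyone curious, the "simulate, make random small changes, keep the improvements" loop described above can be sketched in a few lines. This is a toy under loud assumptions: `simulated_gain` is a made-up stand-in for a real antenna simulator, the four-number "design" and its target values are invented for illustration, and the loop is a bare-bones mutate-and-keep-the-best hill climb, not whatever was actually used for any real antenna.

```python
import random


def simulated_gain(design):
    """Hypothetical stand-in for an antenna simulator.

    Returns a score that is highest (zero) when the design matches an
    arbitrary made-up target; a real simulator would model radiation.
    """
    target = [0.3, -1.2, 0.8, 2.0]  # invented numbers, illustration only
    return -sum((d - t) ** 2 for d, t in zip(design, target))


def evolve(fitness, start, steps=2000, step_size=0.1, seed=0):
    """Randomly perturb the design and keep only changes that score better."""
    rng = random.Random(seed)
    best = list(start)
    best_score = fitness(best)
    for _ in range(steps):
        # Random small change to every parameter of the current best design.
        candidate = [x + rng.gauss(0, step_size) for x in best]
        score = fitness(candidate)
        # Only improvements are passed on to the next iteration.
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


if __name__ == "__main__":
    design, score = evolve(simulated_gain, [0.0, 0.0, 0.0, 0.0])
    print(design, score)
```

The whole thing only works because `fitness` is cheap and trustworthy to evaluate thousands of times, which is the point about being able to simulate antennas reliably and quickly. A fuller version would keep a population of designs and recombine them rather than tracking a single best one.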

"genetic algorithm" - something I hadn't previously seen branded as "AI", but I guess I'm not surprised

yeah that's it, forgot a word for it https://en.wikipedia.org/wiki/Evolved_antenna

that ST5 antenna looks like a low-poly two-turn helical antenna, but what it looks like will be a function of design requirements

GAs went out of the limelight before the rest of the beaus of current “AI” branding came to be, suspect that might be part of it

(Another suspicion is that it’s because they’re…fairly observable, in terms of operation? So it’s far less easily claimable that one of these has gained sentience, or all the other dumb bullshit that the cluster has spun in recent years)

Why is he carrying books and DVDs on a baking sheet?

He has a smart oven with AI and wants to feed it data?

FEED ME, ~~SEYMOUR~~ BILLY!

One is by David Brooks, so it's guaranteed to be half baked?

the faster training data gets polluted the faster ai companies get fucked. therefore, I propose the deliberate creation of unmarked ai compost piles on reddit and discord: "communities" managed so as to minimize visibility to humans while generating large quantities of shit data

We could just mix corporate and bot responses to all content at a 99:1 ratio so the AI companies struggle to tell the difference. Also no need to do anything, as this is running on reddit right now.

if this were running you would be unlikely to know about it. the novel part is not spamming reddit, it's trying to do so strictly to target ai companies, without humans ever seeing the result

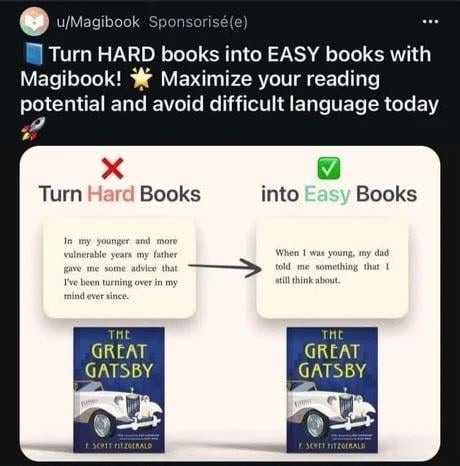

So this is apparently something AI companies now think is smart to advertise with. Don’t know who’d willingly consider this something targeted at them, but here we are.

ah yes, the Simple English wiki filter but wrong

I rewrote the ad so they can lean into their marketing strategy.

Hard book have hard word and make head hurt, AI make book easy! More book read for you. No hard word. This good idea!

I want to make a zoolander riff but my brain just isn't cooperating, so instead pretend I did (just like these people pretend their product is worth something)

I can't even

It's good that they're offsetting their advantages in the marketplace of money by actively crippling themselves in the marketplace of ideas.

the part of me that sometimes spends an hour just choosing the right words for the couple of paragraphs I’m writing is fucking screaming

…

there is a screaming noise that comes from me while I write

thanks magibook!

can’t wait for this shit to show up in usage among people who have english as an additional language at different levels of fluency to their primaries. totally don’t see it causing a cascade clusterfuck in communication and comprehension.

there’s already a strain of this out there, with a lot of english-non-first students using LLMs to “make their work sound more polished”. similar to the strain using it for CVs etc

ran across a great thread on fedi a while back, will see if I can find it

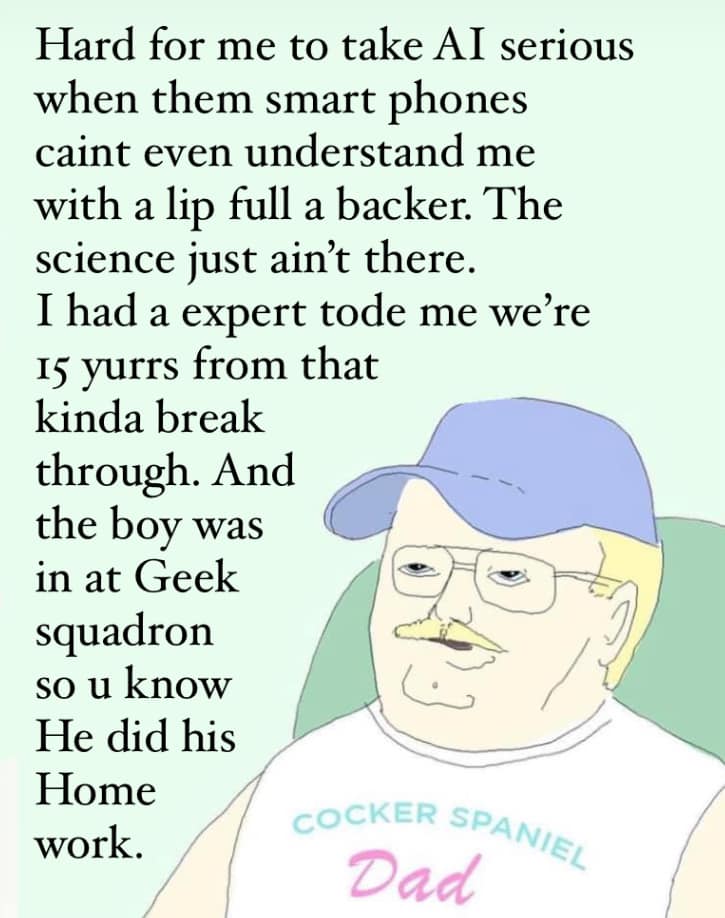

When my local civilians get drawn into the conflict

^via^ ^Little^ ^Bubby^ ^Child^

I could hear this while reading it ahaha

Right?!

Being far from home at the moment, I really appreciate Bubby's mountain vibes. Feels like a trip to Granny's house.

Dan Luu's "A discussion of discussions on AI bias", about techbros trying to gaslight the rest of the world into thinking ML models don't have problems

god damn those recasting images

it will never not be hilarious how pathetic this technology/approach is

Another example which doesn't make a good viral news story is my not being able to put my Vietnamese name in the title of my blog and have my blog indexed by Google outside of Vietnamese-language Google — I tried that when I started my blog and it caused my blog to immediately stop showing up in Google searches unless you were in Vietnam. It's just assumed that the default is that people want English language search results and, presumably, someone created a heuristic that would trigger if you have two characters with Vietnamese diacritics on a page that would effectively mark the page as too Asian and therefore not of interest to anyone in the world except in one country.

the entire post is very good, but my brain zeroed in on this as both a perfect example of why search was absolutely fucked even before LLMs (who in fuck deploys a language heuristic that doesn’t take the content of the page into account? who asked for this?) and of the engineering attitudes that feed into LLMs and generative AI having unevaluated biases and defenders that insist those biases can’t be real